Reciprocal Rank Fusion (RRF) combines BM25 and vector search rankings into one list using a single formula. Learn how RRF works, why k=60, and when to use it.

Reciprocal Rank Fusion (RRF) is a rank aggregation algorithm that merges results from multiple retrievers - typically a keyword (BM25) ranker and a vector (dense embedding) ranker - by summing the reciprocals of each document's rank in each result list. It was introduced by Cormack, Clarke, and Büttcher in their 2009 SIGIR paper, "Reciprocal Rank Fusion outperforms Condorcet and individual Rank Learning Methods". Because RRF operates on rank positions instead of raw scores, it has become a standard built-in hybrid search ranking method in OpenSearch, Elasticsearch, Azure AI Search, MongoDB Atlas, and Weaviate.

The formula is one line:

RRF_score(d) = Σ_r 1 / (k + rank_r(d))

with k = 60 as the typical default. The rest of this guide explains where the formula comes from, why k=60 works, how RRF compares to score normalization methods, and how to wire it up across the major search engines.

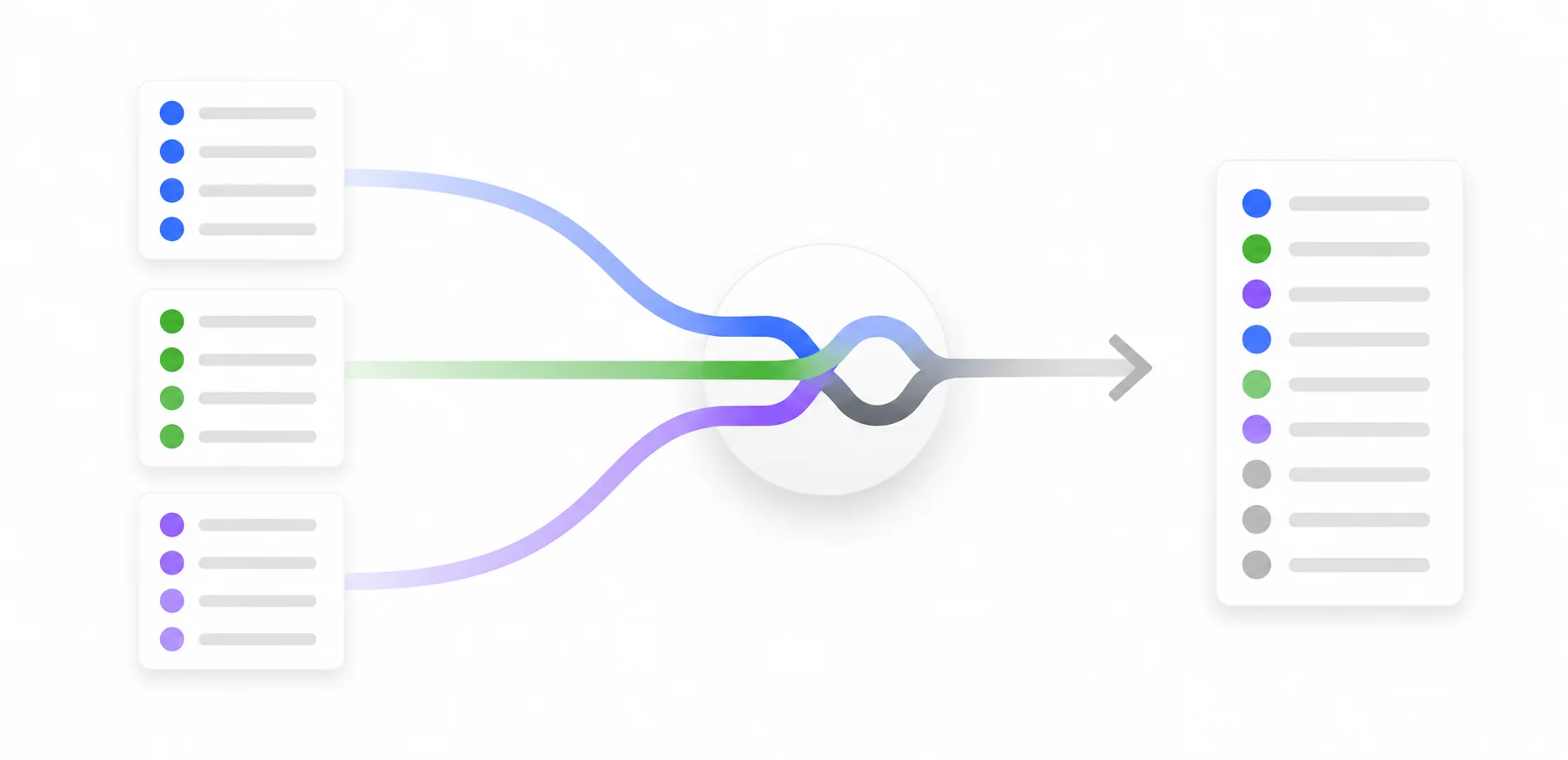

What Reciprocal Rank Fusion Actually Does

Hybrid search has a problem that score-based fusion can't solve cleanly: BM25 returns unbounded positive scores influenced by query length and term statistics, while cosine similarity over normalized embeddings returns values in [-1, 1]. The two distributions don't share an axis. Adding them up, even after rescaling, gives the larger-magnitude side an unearned advantage.

RRF sidesteps the comparability problem by ignoring scores entirely and looking only at rank positions. Each retriever votes for a document by its rank; the votes are inverse-weighted (rank 1 counts more than rank 50), and the votes are summed. A document that ranks well in both lists rises to the top. A document that ranks #1 in only one list still loses to a document at rank #3 in both, which is the desired behavior in most production hybrid search.

The 2009 paper that introduced RRF reported that this trivial-looking method beat every individual learned ranker on the LETOR 3 benchmark, with statistical significance at p < 0.003 - the best published result on that benchmark at the time. That a single-hyperparameter method outperformed trained learning-to-rank models is the historical reason the technique stuck.

Why score-based hybrid search keeps breaking

Score normalization sounds straightforward and fails in subtle ways. Min-max rescaling maps each retriever's scores to [0, 1] using (s - min) / (max - min), and a single outlier score in either list compresses everything else into a narrow band. L2 normalization divides by the score vector's L2 norm; it's better behaved on long-tail distributions, but the result still depends on how many documents you return and on query length.
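The outlier problem with min-max rescaling is easy to reproduce. The sketch below uses made-up BM25-style scores (the numbers are illustrative assumptions, not measurements) and shows how one large score squeezes the rest of the list toward zero:

```python
def min_max(scores):
    """Rescale scores to [0, 1] with (s - min) / (max - min)."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        # All scores identical: no spread to preserve
        return [0.0 for _ in scores]
    return [(s - lo) / (hi - lo) for s in scores]

# Hypothetical raw scores for one query
typical = [12.0, 11.0, 10.0, 9.0, 8.0]
with_outlier = [60.0, 12.0, 11.0, 10.0, 9.0, 8.0]

normalized_typical = min_max(typical)
normalized_outlier = min_max(with_outlier)

print(normalized_typical)
print([round(s, 3) for s in normalized_outlier])
```

In the first list the scores spread evenly across [0, 1]. In the second, the same five documents all land below 0.08, so any weighted sum with another retriever is dominated by that retriever for everything except the outlier.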

Both methods assume that, after rescaling, scores from different retrievers mean roughly the same thing. They don't. BM25 confidence at rank 1 isn't the same kind of quantity as cosine similarity at rank 1. Rank-based fusion makes that mismatch a non-issue.

The Formula, Step by Step

The mathematical definition is:

RRF_score(d) = Σ_{r ∈ R} 1 / (k + rank_r(d))

where R is the set of retrievers, rank_r(d) is the 1-indexed position of document d in retriever r's output, and k is a smoothing constant. Documents that don't appear in a retriever's list contribute 0 (or are skipped) for that retriever.
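As a minimal sketch of the definition above, assuming each retriever hands back a best-first list of unique document ids (the function name `rrf_fuse` and the sample ids are illustrative, not taken from any library):

```python
from collections import defaultdict

def rrf_fuse(ranked_lists, k=60):
    """Fuse best-first lists of document ids with Reciprocal Rank Fusion.

    Returns (doc_id, score) pairs sorted by descending RRF score.
    Documents missing from a list simply add nothing for that list.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        # rank_r(d) is 1-indexed, so enumerate from 1
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Tie-break on doc id so identical inputs always give identical output
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

bm25 = ["d1", "d2", "d3"]
dense = ["d3", "d1", "d4"]
fused = rrf_fuse([bm25, dense])

for doc_id, score in fused:
    print(f"{doc_id}: {score:.5f}")
```

Here d1 and d3 appear in both lists and outrank d2 and d4, which each appear in only one.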

Three numbers tell you most of what you need about the shape of the function at k = 60:

- rank 1 -> 1 / (1 + 60) ≈ 0.01639

- rank 10 -> 1 / (10 + 60) ≈ 0.01429

- rank 100 -> 1 / (100 + 60) = 0.00625

The contribution from rank 1 is only about 15% larger than from rank 10. By rank 100 the contribution is roughly 38% of the rank-1 contribution. That gentle decay is the whole point: RRF rewards consistency, not pole position.

A worked example

Three documents A, B, and C come back from BM25 and a dense vector retriever:

| Document | BM25 rank | Vector rank |
|---|---|---|
| A | 1 | 50 |
| B | 5 | 3 |
| C | 100 | 100 |

At k = 60:

- A: 1/(1+60) + 1/(50+60) = 0.01639 + 0.00909 = 0.02548

- B: 1/(5+60) + 1/(3+60) = 0.01538 + 0.01587 = 0.03125

- C: 1/(100+60) + 1/(100+60) = 0.00625 + 0.00625 = 0.01250

Document B wins, even though A is the BM25 #1 result. B ranks well in both lists; A ranks high in one and mediocre in the other. C is present in both but deep in each, so it lands last. This is the behavior you want from a hybrid ranker.
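The arithmetic above can be checked in a few lines. This is a sketch that hard-codes the ranks from the table; the dictionary layout is just one convenient way to hold them:

```python
K = 60

# Ranks from the worked example: document -> rank in each retriever
ranks = {
    "A": {"bm25": 1, "vector": 50},
    "B": {"bm25": 5, "vector": 3},
    "C": {"bm25": 100, "vector": 100},
}

# RRF score = sum of 1 / (K + rank) over the retrievers
scores = {
    doc: sum(1.0 / (K + rank) for rank in per_retriever.values())
    for doc, per_retriever in ranks.items()
}

ordering = sorted(scores, key=scores.get, reverse=True)

for doc in ordering:
    print(f"{doc}: {scores[doc]:.5f}")
```

The resulting order is B, A, C. The printed values are computed without intermediate rounding, so the last digit can differ slightly from sums of the rounded terms.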

Why k = 60

k controls how aggressively top ranks are weighted. Small k (try k = 1 and rerun the example) blows up the gap between rank 1 and rank 2; large k flattens the curve. Cormack et al. tuned k = 60 on TREC data and reported it generalized well across collections. Subsequent benchmarks across information retrieval and modern hybrid search consistently land in the k ∈ [40, 80] range with similar quality. Most production systems ship k = 60 as the default and let you tune it.
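To see the effect of k concretely, the sketch below reruns the A/B/C worked example at several values of k (the set of values tried is an arbitrary choice for illustration):

```python
# (BM25 rank, vector rank) for each document in the worked example
ranks = {"A": (1, 50), "B": (5, 3), "C": (100, 100)}

def rrf_order(ranks, k):
    """Return document ids ordered by descending RRF score for a given k."""
    scores = {doc: sum(1.0 / (k + r) for r in rs) for doc, rs in ranks.items()}
    return sorted(scores, key=scores.get, reverse=True)

orders = {k: rrf_order(ranks, k) for k in (1, 10, 60, 100)}

for k, order in orders.items():
    print(f"k={k:>3}: {' > '.join(order)}")
```

At k = 1 the single rank-1 hit dominates and A overtakes B; at k = 10, 60, and 100 the consistently ranked B stays on top.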

For high-precision, head-of-list workloads (e-commerce result pages, knowledge base lookup), a smaller k in the 40-60 range tends to win. For recall-oriented workloads where you'll feed the top 50 to a downstream reranker or LLM, a larger k (60-100) flattens the curve, so documents that sit deeper in both lists aren't discounted as heavily relative to each list's head. If you have judged queries, sweep k ∈ {10, 30, 60, 100} and measure NDCG@10 and Recall@N (where N is the cutoff you pass downstream, not the RRF constant) - the curve is usually flat between 40 and 80.

RRF vs. Score Normalization

| Method | Operates on | Robust to score outliers? | Tuning surface | Where you find it |
|---|---|---|---|---|
| RRF | Ranks | Yes | Single k | OpenSearch, Elasticsearch, Azure AI Search, MongoDB Atlas, Weaviate |
| Min-max | Normalized scores | No | Per-retriever weights | Some legacy hybrid configurations |
| L2 normalization | Normalized scores | Partial | Per-retriever weights | OpenSearch normalization processor (alternative to RRF) |
| CombSUM / CombMNZ | Normalized scores | Partial | Weights, hit counts | Mostly research and historical |

CombSUM and CombMNZ are the predecessors RRF largely displaced. Fox & Shaw introduced them in 1994: CombSUM sums normalized scores across retrievers, CombMNZ multiplies that sum by the number of lists a document appears in. They were the standard before Cormack 2009 demonstrated that ranks alone do better than normalized scores.
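For comparison with RRF, here is a minimal sketch of the two score-based methods as described above. It assumes scores have already been normalized per retriever; the function names and sample scores are illustrative:

```python
def comb_sum(score_lists):
    """CombSUM: sum each document's normalized scores across retrievers.

    score_lists is a list of dicts mapping doc id -> normalized score.
    """
    fused = {}
    for scores in score_lists:
        for doc, score in scores.items():
            fused[doc] = fused.get(doc, 0.0) + score
    return fused

def comb_mnz(score_lists):
    """CombMNZ: CombSUM multiplied by the number of lists containing the doc."""
    summed = comb_sum(score_lists)
    hits = {doc: sum(doc in scores for scores in score_lists) for doc in summed}
    return {doc: summed[doc] * hits[doc] for doc in summed}

# Hypothetical normalized scores from two retrievers
bm25 = {"d1": 1.0, "d2": 0.5}
dense = {"d2": 0.75, "d3": 0.25}

print(comb_sum([bm25, dense]))
print(comb_mnz([bm25, dense]))
```

Both depend on the score values themselves, so they inherit whatever distortion the normalization step introduced. RRF avoids that dependency by reading only the positions.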

There's a real case for score-aware fusion: when both retrievers produce well-calibrated scores on the same scale (rare in practice), or when you need to weight retrievers asymmetrically by score magnitude rather than rank position. OpenSearch's own benchmarks comparing RRF against tuned score normalization across six datasets found RRF roughly 3.86% lower on NDCG@10 with 1-2% latency improvements at p50/p90/p99. That tradeoff - small quality cost for stability and lower operational overhead - is why RRF is the default rather than the only option.

RRF in Practice Across Search Engines

The implementations differ in surface API but compute the same formula. Version notes below reflect when RRF was introduced as a documented feature; check vendor docs for current GA status.

OpenSearch

OpenSearch ships two hybrid-fusion processors that you wire into a search pipeline: the older normalization-processor (min-max or L2 normalization, then weighted combination) and the newer score-ranker-processor (RRF). RRF was added in the 2.19 release and is available in 3.x. Both attach to the same hybrid query construct, which takes an array of sub-queries — typically a BM25 match and a neural or knn clause — and returns a single ranked list.

You configure RRF as a phase-results processor in a pipeline:

    PUT /_search/pipeline/hybrid-rrf
    {
      "description": "Post processor for hybrid RRF search",
      "phase_results_processors": [
        {
          "score-ranker-processor": {
            "combination": {
              "technique": "rrf",
              "rank_constant": 60
            }
          }
        }
      ]
    }

rank_constant defaults to 60 if omitted. Once the pipeline exists, attach it per-request with the search_pipeline parameter, or mark it the index default via index.search.default_pipeline so every hybrid query on that index goes through RRF without changing the client:

    POST /my_index/_search?search_pipeline=hybrid-rrf
    {
      "query": {
        "hybrid": {
          "queries": [
            { "match": { "title": "wireless headphones" } },
            { "neural": { "embedding": { "query_text": "wireless headphones", "model_id": "<model-id>", "k": 50 } } }
          ]
        }
      },
      "size": 10
    }

The hybrid query is what makes the sub-results addressable to the processor — a regular bool/should won't work, because the processor needs each sub-query's ranked list separately to compute reciprocal ranks.

Choosing between the two processors is the live design decision in OpenSearch. The normalization-processor gives you per-sub-query weights ("weights": [0.3, 0.7]) and lets you tune the relative pull of lexical vs. semantic results, which matters when one side is systematically stronger on your corpus. RRF gives up that knob in exchange for not having to keep weights calibrated as embeddings, analyzers, or corpus distributions drift. The OpenSearch team's own six-dataset benchmark — referenced above — quantifies the tradeoff: RRF is slightly behind on raw NDCG@10 but faster at every percentile and immune to score-distribution drift. For most production hybrid workloads on OpenSearch, RRF is the right default; reach for the normalization processor when you have judged data showing one retriever should dominate. See the OpenSearch RRF announcement for full request bodies.

Elasticsearch

Elasticsearch added the rrf retriever in the 8.14 timeframe and made the retrievers framework generally available in 8.16. The shape:

    GET /products/_search
    {
      "retriever": {
        "rrf": {
          "retrievers": [
            { "standard": { "query": { "match": { "title": "wireless headphones" } } } },
            { "knn": { "field": "embedding", "query_vector": [/* ... */], "k": 50, "num_candidates": 200 } }
          ],
          "rank_window_size": 50,
          "rank_constant": 60
        }
      }
    }

rank_window_size is how many results to pull from each child retriever before fusion. rank_constant is k.

Azure AI Search

Azure AI Search uses RRF as the only hybrid scoring method - no normalization alternative is exposed. RRF runs automatically when a query has both a search clause and vectorQueries. See Microsoft Learn's hybrid search ranking page for the documented behavior; k is fixed by the service and not user-configurable.

MongoDB Atlas

MongoDB introduced the $rankFusion aggregation stage for native hybrid search. $rankFusion is available on MongoDB 8.0+; running it with $vectorSearch inside the input pipeline requires 8.1+. A typical query:

    db.products.aggregate([
      {
        $rankFusion: {
          input: {
            pipelines: {
              searchPipeline: [
                { $search: { index: "default", text: { query: "wireless headphones", path: "title" } } }
              ],
              vectorPipeline: [
                { $vectorSearch: { index: "vec", path: "embedding", queryVector: [/* ... */], numCandidates: 200, limit: 50 } }
              ]
            }
          },
          combination: { weights: { searchPipeline: 1, vectorPipeline: 1 } }
        }
      },
      { $limit: 10 }
    ])

Per-pipeline weights let you bias one retriever over another without abandoning the rank-based approach.

pgvector and PostgreSQL

pgvector itself doesn't ship RRF - you write it as a CTE. The pattern is two CTEs (lexical via ts_rank, vector via the <=> distance operator), ROW_NUMBER() to assign ranks within each, a FULL OUTER JOIN on document id, and a sum of reciprocals:

    WITH lexical AS (
      -- Rank the full-text matches by ts_rank, best first
      SELECT id,
             ROW_NUMBER() OVER (
               ORDER BY ts_rank(tsv, plainto_tsquery('wireless headphones')) DESC
             ) AS rn
      FROM products
      WHERE tsv @@ plainto_tsquery('wireless headphones')
      ORDER BY rn  -- without this, LIMIT keeps an arbitrary 50 rows, not the top 50
      LIMIT 50
    ),
    vector AS (
      -- Rank by vector distance, nearest first
      SELECT id,
             ROW_NUMBER() OVER (ORDER BY embedding <=> '[...]'::vector) AS rn
      FROM products
      ORDER BY embedding <=> '[...]'::vector
      LIMIT 50
    )
    SELECT
      COALESCE(l.id, v.id) AS id,
      -- A document missing from one list contributes 0 for that list
      COALESCE(1.0 / (60 + l.rn), 0) + COALESCE(1.0 / (60 + v.rn), 0) AS rrf_score
    FROM lexical l
    FULL OUTER JOIN vector v ON l.id = v.id
    ORDER BY rrf_score DESC
    LIMIT 10;

The COALESCE(..., 0) handles documents that appear in only one list. SingleStore and other SQL-flavored systems use the same pattern.

Weaviate

Weaviate's hybrid query supports both rankedFusion (RRF) and relativeScoreFusion (a normalized variant). rankedFusion was the default starting in v1.20; the default switched to relativeScoreFusion in v1.24. RRF is still available by setting fusionType: rankedFusion.

Tuning RRF in Production

A few decisions matter more than the rest.

Rank window size. This is how many results to pull from each retriever before fusion. 50 to 100 per retriever is the common range. Documents outside the window contribute 0, so a true positive that lives at rank 80 in one list and rank 5 in the other loses its rank-80 contribution entirely if your window is 50; at k = 60 that is nearly a third of the score it deserves. Larger windows mean more recall and higher latency - benchmark before settling on a number.
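The cost of a too-small window can be computed directly. The sketch below takes the rank-80 / rank-5 document from the paragraph above and compares its score with and without a window of 50, at k = 60 (the helper `rrf_score` is illustrative):

```python
def rrf_score(ranks, k=60, window=None):
    """RRF score for one document given its rank in each retriever.

    A rank of None means the retriever did not return the document.
    Ranks beyond `window` are dropped, as they would be before fusion.
    """
    total = 0.0
    for rank in ranks:
        if rank is None or (window is not None and rank > window):
            continue
        total += 1.0 / (k + rank)
    return total

full = rrf_score([80, 5])                  # both contributions counted
truncated = rrf_score([80, 5], window=50)  # the rank-80 hit falls outside the window
kept = truncated / full

print(f"full={full:.5f} truncated={truncated:.5f} kept={kept:.0%}")
```

With these inputs the document keeps about 68% of its full score, which can be enough to push it below documents that ranked moderately in both lists.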

Weighted RRF. The basic formula treats every retriever equally. The weighted variant Σ_r w_r / (k + rank_r(d)) lets you bias one retriever when you have evidence it's systematically better. MongoDB's $rankFusion exposes weights directly; Weaviate's hybrid query has an alpha parameter that interpolates between dense and sparse; OpenSearch supports per-retriever weights through the normalization processor. Don't reach for weights until you've measured - the equal-weight default is usually fine.
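A minimal sketch of the weighted variant, assuming one weight per retriever in the same order as the ranked lists. The function name and the sample weights are illustrative and do not mirror any vendor's API:

```python
from collections import defaultdict

def weighted_rrf(ranked_lists, weights, k=60):
    """Weighted RRF: each retriever's reciprocal-rank vote is scaled by w_r."""
    if len(ranked_lists) != len(weights):
        raise ValueError("need exactly one weight per ranked list")
    scores = defaultdict(float)
    for ranking, weight in zip(ranked_lists, weights):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += weight / (k + rank)
    # Tie-break on doc id for stable output
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

bm25 = ["a", "b"]
dense = ["b", "a"]

equal = weighted_rrf([bm25, dense], [1.0, 1.0])
dense_heavy = weighted_rrf([bm25, dense], [1.0, 2.0])

print([doc for doc, _ in equal])
print([doc for doc, _ in dense_heavy])
```

With equal weights the two documents tie and the id tie-break decides. Doubling the dense weight puts the dense retriever's top hit, b, first.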

Evaluation. Build a small labeled set (50-200 queries with judged documents) and measure NDCG@10, Recall@k, and MRR. Sweep k and rank_window_size. Watch for distribution shift: RRF's stability advantage shows up most when one retriever's score distribution drifts (a fresh embedding model, a reindexed corpus, a new analyzer chain). A score-tuned hybrid setup that beats RRF on the dev set can lose to it on next quarter's traffic.
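An evaluation sweep can be quite small. The following sketch uses binary relevance and two toy judged queries (all data here is hypothetical) to compute NDCG@10 and MRR for several values of k; a real harness would load judged queries and call the actual retrievers:

```python
import math

def rrf_fuse(ranked_lists, k):
    """Return doc ids ordered by descending RRF score (ties broken by id)."""
    scores = {}
    for ranking in ranked_lists:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] = scores.get(doc, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: (-scores[d], d))

def ndcg_at(ranking, relevant, n=10):
    """Binary-relevance NDCG@n."""
    dcg = sum(1.0 / math.log2(i + 1)
              for i, doc in enumerate(ranking[:n], start=1) if doc in relevant)
    ideal = sum(1.0 / math.log2(i + 1)
                for i in range(1, min(len(relevant), n) + 1))
    return dcg / ideal if ideal else 0.0

def reciprocal_rank(ranking, relevant):
    """1 / position of the first relevant document, or 0 if none is found."""
    for i, doc in enumerate(ranking, start=1):
        if doc in relevant:
            return 1.0 / i
    return 0.0

# Hypothetical judged queries: per-retriever result lists plus relevant ids
judged = [
    {"lists": [["a", "b", "c"], ["c", "a", "d"]], "relevant": {"a"}},
    {"lists": [["x", "y"], ["y", "z"]], "relevant": {"y", "z"}},
]

def sweep(judged, ks=(10, 30, 60, 100)):
    """Average NDCG@10 and MRR over the judged queries for each k."""
    report = {}
    for k in ks:
        fused = [(rrf_fuse(q["lists"], k), q["relevant"]) for q in judged]
        report[k] = {
            "ndcg@10": sum(ndcg_at(r, rel) for r, rel in fused) / len(fused),
            "mrr": sum(reciprocal_rank(r, rel) for r, rel in fused) / len(fused),
        }
    return report

report = sweep(judged)
for k, metrics in report.items():
    print(k, {name: round(value, 4) for name, value in metrics.items()})
```

The same loop extends to rank_window_size by truncating each list before fusion.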

Common pitfalls. Forgetting to deduplicate across lists - some clients do it for you, some don't. Different document id schemes between lexical and vector indexes, which makes the join lossy. Setting k too small and amplifying noise from whichever retriever happens to produce a flaky #1.
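The first two pitfalls can be guarded against with a small pre-fusion step. This sketch assumes the mismatch is integer ids in the lexical index versus string ids in the vector index; real id schemes may need a different canonical form, so the `canonical` callable is a stand-in:

```python
def dedupe_keep_first(ranking, canonical=str):
    """Map ids to one canonical form and drop repeats, keeping the best rank."""
    seen = set()
    cleaned = []
    for doc_id in ranking:
        key = canonical(doc_id)
        if key not in seen:
            seen.add(key)
            cleaned.append(key)
    return cleaned

# Hypothetical raw results: the lexical index returns ints (with a duplicate),
# the vector index returns strings
lexical = dedupe_keep_first([42, 7, 42])
vector = dedupe_keep_first(["7", "42"])

print(lexical, vector)
```

After this step both lists use the same id form, so a document found by both retrievers accumulates both contributions instead of being scored as two separate documents.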

Frequently Asked Questions

Why is k = 60 the default? It's the empirical sweet spot Cormack et al. found on TREC data in 2009. Subsequent benchmarks across information retrieval and modern hybrid search consistently find k ∈ [40, 80] performs comparably, and most vendors picked 60 for that reason.

Can RRF combine more than two retrievers? Yes. The formula sums over any number of rankers. Practical examples include BM25 + dense + sparse (e.g., SPLADE), or text + image + metadata retrievers in multimodal search. The math doesn't change; you just add another term.

What about documents that appear in only one retriever's results? They contribute the reciprocal-rank from that one list and 0 from the other, so they're penalized relative to documents present in both. That's exactly the behavior you want from a hybrid ranker.

Do all retrievers have to return the same number of results? No. Set rank_window_size generously enough that the true positives fall inside the window for each retriever. If one retriever returns 30 results and another returns 100, the missing positions just contribute 0.

Is RRF deterministic? Yes, given identical input lists. Non-determinism in production usually traces back to upstream retrievers - distributed BM25 with tied scores, approximate vector search with shifting graph state, or analyzers that depend on cluster routing.

Key Takeaways

- RRF combines results from multiple retrievers by summing 1 / (k + rank) across lists. It operates on ranks, not scores, which is why it's robust to mismatched score distributions.

- The default k = 60 comes from the Cormack-Clarke-Büttcher 2009 paper and has held up across more than a decade of benchmarks. Tune within [40, 80] if you have labeled data.

- RRF is built into OpenSearch, Elasticsearch, Azure AI Search, MongoDB Atlas, and Weaviate. pgvector requires a SQL CTE; ParadeDB exposes it natively.

- Score normalization (min-max, L2) can edge out RRF when both retrievers produce calibrated scores on the same scale - an uncommon situation. RRF wins on stability and operational simplicity.

- For RAG pipelines, a typical recipe is to retrieve top-50 from each retriever, RRF-fuse, and pass the top-10 to the LLM.

If you're building hybrid search or a RAG pipeline and want a sanity check on your fusion strategy, get in touch - we work with engineering teams on exactly this.