A cross-encoder reranker is a second retrieval pass that jointly encodes query and candidate to score relevance precisely. It is the cheapest, highest-leverage fix for RAG systems where vector search returns the right answer somewhere in the top 50 but rarely at rank 1.

Concretely, the reranker takes a query and a candidate passage, runs them through a transformer with full attention across both, and returns a single relevance score. Drop one between your vector store and your LLM, and a typical production RAG pipeline commonly gains 5-15 points of NDCG@10, and 20+ on lexically hard datasets, for under 200 ms of added latency. That is not a marginal improvement. It is the difference between a RAG system that hallucinates and one that cites.

This post covers why first-stage retrieval alone is not enough, how cross-encoders differ from the bi-encoders behind dense search, the 2025-2026 reranker landscape, how to measure lift on your own data, and how to wire a reranker into an OpenSearch pipeline without blowing the latency budget.

Why First-Stage Retrieval Is Not Enough

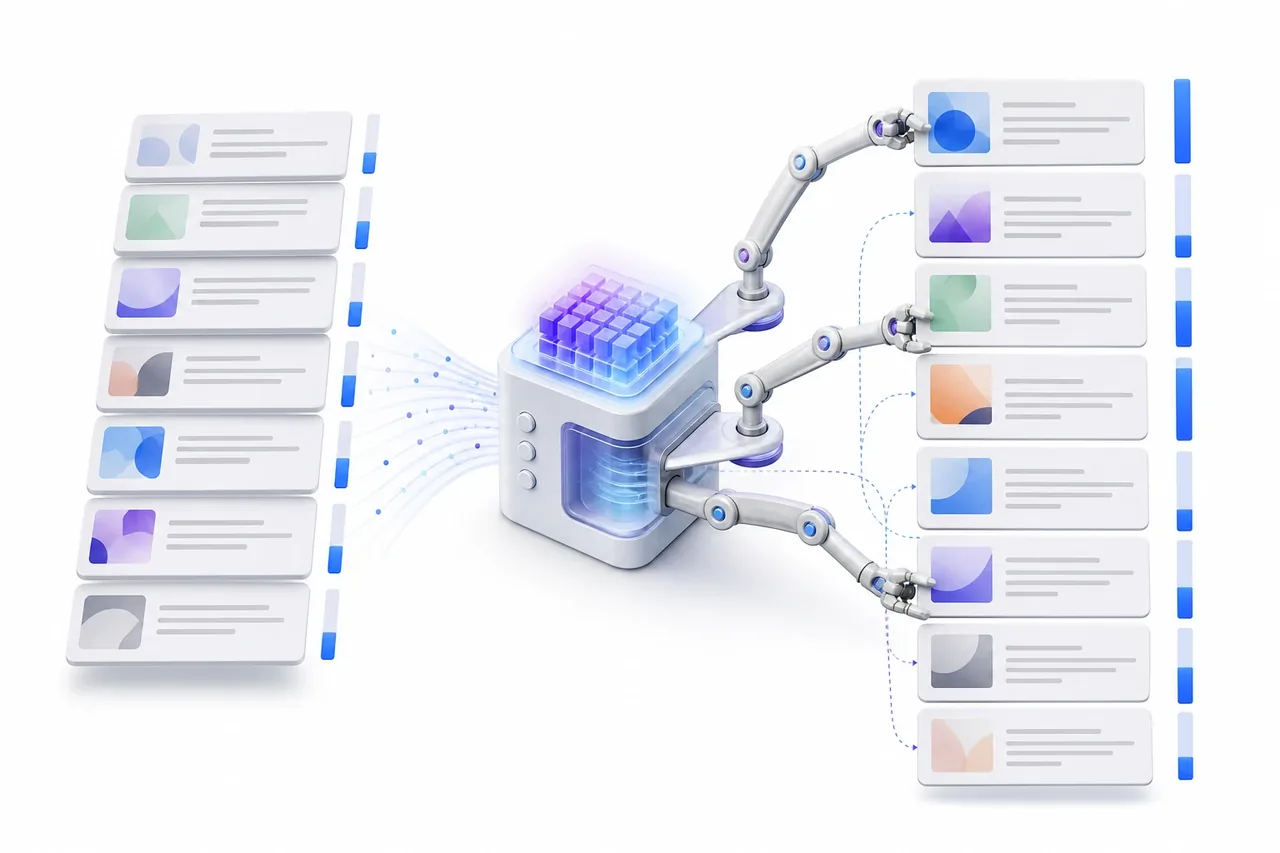

Vector search is built for recall. A bi-encoder embeds queries and documents independently, then approximates nearest neighbours in vector space - the architecture is designed to surface the right answer somewhere in the top 50 to 200, not to put it at rank 1. That trade-off is fine when a human is browsing a result page. It is brutal when an LLM is reading the top 3 to 5.

Three failure modes are responsible for most of the gap between recall@100 and precision@5:

- Chunk boundary artifacts. A sentence that answers the query lands in chunk A; the supporting context lands in chunk B. Neither chunk scores high alone because each is missing half the signal.

- Embedding collisions. Negations, conditionals, and near-paraphrases collapse into nearby points in vector space. "Refunds are issued within 14 days" and "refunds are not issued after 14 days" can sit close enough to confuse a top-k cut.

- Lexical gaps. Rare entity names, IDs, error codes, and product SKUs are exactly the tokens dense embeddings struggle with - the very tokens users care most about. Sparse retrieval helps here, but does not solve the precision problem on its own.

Most production RAG systems feed three to ten passages into the LLM context window. One bad passage is enough to trigger a hallucinated answer or a hedge. Precision@5 is what determines answer quality. Recall@100 is what makes precision@5 achievable.

Cross-Encoders vs Bi-Encoders, Explained

A bi-encoder encodes the query and each document independently into fixed-size vectors, then compares them with cosine similarity or dot product. The encoding work for documents happens once at index time. At query time, only the query is encoded; comparison is a single matrix multiplication. This is what makes ANN search over millions of vectors return in tens of milliseconds.

A cross-encoder takes a different route. It concatenates query and document into a single input sequence and runs full self-attention across both. The output is not a vector you can index - it is a single relevance score. Every token of the query attends to every token of the document, and vice versa, so the model captures negations, conditionals, fine-grained semantic match, and lexical-semantic interactions that single-vector similarity flattens away.

The cost is asymmetric. A cross-encoder forward pass is roughly 10x to 100x more expensive per query-document pair than a bi-encoder similarity computation. Scoring 1M documents with a 22M-parameter cross-encoder at ~5 ms per pair takes around 83 minutes. That is why cross-encoders cannot be the first stage. The standard pattern is a two-stage cascade:

query

↓

[ stage 1 ] bi-encoder + BM25 hybrid, ANN over the full corpus

↓ top 50-100 candidates

[ stage 2 ] cross-encoder rerank, full attention

↓ top 3-5 passages

LLM context

Stage 1 optimizes for recall. Stage 2 optimizes for precision. Total latency stays under 300 ms while the precision gain comes from where it actually matters.
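To make the cascade concrete, here is a minimal sketch in plain Python. The `Scorer` signature, `cascade`, and `full_scan_minutes` are hypothetical names introduced for illustration; in a real system stage 1 would be an ANN/BM25 query against an index rather than a scan over an in-memory list.

```python
from typing import Callable, Sequence

# Hypothetical scorer signature: takes a query and passages, returns one
# float per passage (higher means more relevant).
Scorer = Callable[[str, Sequence[str]], list[float]]


def full_scan_minutes(n_docs: int, ms_per_pair: float) -> float:
    """Minutes needed to cross-encode every document for a single query."""
    return n_docs * ms_per_pair / 1000 / 60


def top_k(passages: Sequence[str], scores: Sequence[float], k: int) -> list[str]:
    """Return the k highest-scoring passages, best first."""
    order = sorted(range(len(passages)), key=lambda i: scores[i], reverse=True)
    return [passages[i] for i in order[:k]]


def cascade(
    query: str,
    corpus: Sequence[str],
    cheap_scorer: Scorer,
    precise_scorer: Scorer,
    n_candidates: int = 100,
    n_final: int = 5,
) -> list[str]:
    """Two-stage retrieval: a cheap recall pass, then a precise rerank."""
    # Stage 1: score everything cheaply (stand-in for ANN + BM25).
    candidates = top_k(corpus, cheap_scorer(query, corpus), n_candidates)
    # Stage 2: run the expensive scorer only on the shortlist.
    return top_k(candidates, precise_scorer(query, candidates), n_final)
```

With the figures from the text, `full_scan_minutes(1_000_000, 5)` comes out at roughly 83 minutes, while the same 5 ms per pair over a 100-candidate shortlist is about half a second if scored one pair at a time, and less when batched.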

A late-interaction model like ColBERT sits between these two architectures. ColBERT stores a small embedding per token rather than one vector per document, and computes a MaxSim score between query tokens and document tokens at query time. It is faster than a full cross-encoder because there is no joint forward pass, and it captures more than a single-vector bi-encoder because the matching is token-level. The trade-off is index size: per-token embeddings inflate storage by 1-2 orders of magnitude.
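The MaxSim operation is small enough to write out. The sketch below uses plain Python lists as stand-ins for per-token embeddings; real ColBERT implementations work on normalized tensors and compressed indexes, so treat this as an illustration of the scoring rule only.

```python
from typing import Sequence

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def maxsim(query_vecs: Sequence[Vector], doc_vecs: Sequence[Vector]) -> float:
    """Late-interaction score: for each query token, take its best-matching
    document token, then sum those maxima over all query tokens."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)
```

Because the document token vectors are computed at index time, only the query tokens are encoded at query time, which is where the speed advantage over a joint forward pass comes from.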

The Reranker Landscape in 2025-2026

The choice between hosted and self-hosted is the first decision point. Hosted reranking gives you predictable latency and zero infrastructure; self-hosting buys you data residency, custom domain fine-tuning, and a flat amortized cost at scale.

Cohere Rerank 3.5

Cohere ships Rerank 3.5 as a hosted API with strong multilingual coverage and long-context support. The current public model name is rerank-v3.5. Pricing as of early 2026 is around $2.00 per 1,000 search units, where one unit is a query plus up to 100 documents and any document over 500 tokens is auto-chunked. It is also available through Bedrock and Oracle's Generative AI service for teams with cloud-procurement constraints. For most teams, this is the fastest path to measurable lift.

BGE Reranker v2 family

BAAI publishes the BGE reranker v2 family under Apache 2.0:

- bge-reranker-v2-m3 - the multilingual workhorse, built on the bge-m3 base, the default starting point for most self-hosted setups.

- bge-reranker-v2-gemma - LLM-based on gemma-2b, higher quality on English, slower.

- bge-reranker-v2-minicpm-layerwise - LLM-based on MiniCPM-2B with layer-wise scoring; pick the layer band that matches your latency budget.

- bge-reranker-v2.5-gemma2-lightweight - newer and lighter than v2-gemma; worth benchmarking against v2-m3 if you are English-first.

The FlagEmbedding repo is the canonical source.

Jina Reranker v2

jina-reranker-v2-base-multilingual is a cross-encoder with Flash Attention 2, support for over 100 languages, function-calling and code-aware training, and a 1024-token context. Jina reports it processes documents around 15x faster than bge-reranker-v2-m3, which makes it a strong candidate for developer-tools and code search where throughput matters as much as accuracy.

ColBERTv2 and RAGatouille

ColBERTv2 (Santhanam et al., 2021) is the late-interaction reference. It can replace both retriever and reranker in some pipelines, at the cost of larger indexes. RAGatouille is the Python wrapper that makes it usable without reading the original research papers.

Sentence-Transformers cross-encoder baselines

cross-encoder/ms-marco-MiniLM-L-6-v2 is a 22M-parameter MS MARCO baseline that is English-only but extremely fast. It is the right model to prototype with - if you cannot move the needle here on a small labeled set, your eval is broken before you have spent a cent on a hosted API.
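A prototype with this baseline fits in a few lines using the `CrossEncoder` class from the sentence-transformers package. This is a sketch, not a production setup: `rerank` and `order_by_score` are hypothetical helper names, the `max_length=512` setting is an assumption, and the model is loaded inside the function only to keep the example self-contained (load it once at startup in a real service).

```python
from typing import Sequence

MODEL_NAME = "cross-encoder/ms-marco-MiniLM-L-6-v2"


def order_by_score(
    passages: Sequence[str], scores: Sequence[float], top_n: int
) -> list[tuple[str, float]]:
    """Pair passages with scores and return the top_n, best first."""
    ranked = sorted(zip(passages, scores), key=lambda pair: pair[1], reverse=True)
    return [(passage, float(score)) for passage, score in ranked[:top_n]]


def rerank(query: str, passages: Sequence[str], top_n: int = 5) -> list[tuple[str, float]]:
    """Score every (query, passage) pair jointly and keep the best top_n."""
    # Imported lazily so order_by_score stays usable without the package.
    from sentence_transformers import CrossEncoder

    model = CrossEncoder(MODEL_NAME, max_length=512)
    # predict() takes a list of (query, passage) pairs and returns one
    # relevance score per pair.
    scores = model.predict([(query, passage) for passage in passages])
    return order_by_score(passages, scores, top_n)
```

The scores are only meaningful relative to each other for the same query, so compare rankings, not absolute values, when evaluating.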

Decision matrix

| Reranker | Hosting | Params / size | Languages | Max doc tokens | Typical latency (top-100) | License | Cost model |
|---|---|---|---|---|---|---|---|
| Cohere Rerank 3.5 | API | not disclosed | 100+ | ~4096 | ~150-300 ms incl. network | Proprietary | ~$2 / 1k searches |
| BGE Reranker v2-m3 | Self-host | 568M | Multilingual | 512-1024 | ~80-200 ms on A10/L4 | Apache 2.0 | GPU amortization |
| BGE Reranker v2-gemma | Self-host | 2B (gemma-2b) | English-first | 512-2048 | ~200-400 ms on A10/L4 | Apache 2.0 (gemma) | GPU amortization |
| Jina Reranker v2 | API or self-host | base | 100+ | 1024 | ~30-100 ms on GPU | CC BY-NC 4.0 (weights) / API | API or GPU |
| ColBERTv2 (via RAGatouille) | Self-host | base | English-strong | varies | Sub-100 ms with PLAID | Apache 2.0 | Storage + GPU |
| MS MARCO MiniLM L-6-v2 | Self-host | 22M | English | 512 | ~50-80 ms on A10 | Apache 2.0 | CPU/GPU |

Latency numbers depend heavily on batch size, passage length, and exact GPU. Treat them as ballparks, not benchmarks.

Measuring Reranker Impact

You cannot ship a reranker without an eval set. Vibes will not tell you whether the model is helping, hurting, or quietly regressing on the long tail.

A workable starting point is 100 to 500 real production queries with top-20 retrieved passages labeled relevant, partially relevant, or irrelevant. Domain experts give the cleanest labels; LLM-assisted labeling (with manual spot-checks on 20% of the set) scales when expert time is scarce. Store the result as query → [(doc_id, grade)], version it, and treat eval data as code.

Four metrics cover most decisions:

- NDCG@10 - the primary ranking-quality metric, position-weighted.

- MRR@10 - how high the first relevant result lands; useful when you serve a single answer.

- Recall@k - the sanity check that reranking is not silently pushing relevant docs out of the top-k window you actually pass to the LLM.

- Hit@k - did at least one relevant doc make the top-k cut?

Always report before-and-after on the same candidate set so the only variable is the reranker.
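The four metrics are short enough to implement directly. The sketch below assumes graded labels stored per query as a `doc_id -> grade` mapping with 0 = irrelevant, 1 = partially relevant, 2 = relevant, and uses the exponential-gain form of DCG; both choices are assumptions for illustration, and a linear-gain variant is equally common.

```python
import math
from typing import Mapping, Sequence


def dcg(grades: Sequence[int]) -> float:
    """Discounted cumulative gain with exponential gain (2^grade - 1)."""
    return sum((2**g - 1) / math.log2(i + 2) for i, g in enumerate(grades))


def ndcg_at_k(ranking: Sequence[str], qrels: Mapping[str, int], k: int = 10) -> float:
    """DCG of the ranking divided by the DCG of the ideal ordering."""
    gains = [qrels.get(doc_id, 0) for doc_id in ranking[:k]]
    ideal = dcg(sorted(qrels.values(), reverse=True)[:k])
    return dcg(gains) / ideal if ideal > 0 else 0.0


def mrr_at_k(ranking: Sequence[str], qrels: Mapping[str, int], k: int = 10, min_grade: int = 1) -> float:
    """Reciprocal rank of the first doc graded at least min_grade."""
    for rank, doc_id in enumerate(ranking[:k], start=1):
        if qrels.get(doc_id, 0) >= min_grade:
            return 1.0 / rank
    return 0.0


def recall_at_k(ranking: Sequence[str], qrels: Mapping[str, int], k: int, min_grade: int = 1) -> float:
    """Fraction of relevant docs that appear in the top k."""
    relevant = {d for d, g in qrels.items() if g >= min_grade}
    if not relevant:
        return 0.0
    return len(relevant & set(ranking[:k])) / len(relevant)


def hit_at_k(ranking: Sequence[str], qrels: Mapping[str, int], k: int, min_grade: int = 1) -> bool:
    """True if at least one relevant doc made the top-k cut."""
    return recall_at_k(ranking, qrels, k, min_grade) > 0
```

Run each function on the first-stage ordering and on the reranked ordering of the same candidates, then average per-query differences across the eval set.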

Retrieval metrics are necessary but not sufficient. End-to-end answer quality is what users care about. The RAGAS framework gives you faithfulness, answer relevance, and context precision scores. The TREC RAG 2024 track is the closest thing to a community benchmark methodology. Pair the retrieval metric with at least one downstream metric - the gap between "we retrieved better passages" and "users got better answers" is real.

For shipping, gate behind a feature flag. Route 5-10% of traffic through the reranked path, log both ranked lists, and compute per-query lift on the metrics you committed to. Watch out for domain shift: an off-the-shelf reranker trained on MS MARCO may underperform on legal, medical, or code data. Fine-tuning on 1k-5k labeled domain pairs is a common path to an additional few NDCG points.
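One way to implement the traffic split is deterministic hashing, so the same user always lands on the same path and results stay comparable across sessions. The function names and the `salt` value below are hypothetical; most feature-flag systems offer an equivalent built in.

```python
import hashlib
from typing import Sequence


def in_rerank_bucket(user_id: str, percent: float, salt: str = "rerank-v1") -> bool:
    """Deterministically assign about `percent` % of users to the reranked path."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100


def mean_lift(before: Sequence[float], after: Sequence[float]) -> float:
    """Average per-query change in a metric (after minus before)."""
    if not before or len(before) != len(after):
        raise ValueError("need two non-empty, equal-length score lists")
    return sum(a - b for b, a in zip(before, after)) / len(before)
```

Changing the salt reshuffles the assignment, which is useful when you start a new experiment and do not want it to inherit the previous cohort.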

Latency, Cost, and When Reranking Hurts

Reranker latency is dominated by candidate count, passage length, and model size. As a rough budget on an A10 / L4-class GPU:

- MiniLM-class (22M): 50-80 ms for 100 docs at 256 tokens.

- BGE v2-m3 (568M): 80-200 ms for 100 docs at 512 tokens.

- BGE v2-gemma (2B): 200-400 ms; quantization or smaller candidate pools usually required.

CPU inference is generally not viable for real-time reranking at top-100. Truncate passages to 256 to 512 tokens, batch all candidates in a single forward pass for GPU efficiency, and watch for very short passages (under 50 tokens) producing unstable scores - pad them with metadata or surrounding context.

The cost math is straightforward. Cohere at ~$2 per 1k searches with 100 docs each works out to about $0.002 per query, is predictable, and removes ops overhead. A 568M model self-hosted on a spot A10 at $0.50-$0.80/hr matches that on raw GPU cost at 250-400 queries per hour; once you budget for idle capacity and redundancy, the practical break-even lands somewhere around 500-1,000 queries per hour, depending on utilization. The hidden cost on the self-host side is the engineering time for deployment, monitoring, and model updates - that is the line item that decides build versus buy more often than the GPU bill.
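The break-even arithmetic can be checked with a few lines. The `overhead_factor` parameter is an assumption introduced here to stand in for idle capacity, redundancy, and similar multipliers on the raw GPU price; it is not a measured figure.

```python
def break_even_queries_per_hour(
    gpu_usd_per_hour: float,
    api_usd_per_1k_searches: float,
    overhead_factor: float = 1.0,
) -> float:
    """Hourly query volume at which self-hosting costs the same as the API.

    overhead_factor scales the GPU price to cover idle headroom and
    redundancy (1.0 means a single, fully used GPU).
    """
    effective_gpu_cost = gpu_usd_per_hour * overhead_factor
    return effective_gpu_cost * 1000 / api_usd_per_1k_searches
```

At $2 per 1k searches, a $0.50/hr GPU breaks even at 250 queries per hour and a $0.80/hr GPU at 400; doubling the effective GPU cost to cover overhead moves that to 500-800.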

Reranking does not always help. It hurts on:

- Single-keyword or exact-match queries where BM25 already nails rank 1.

- Corpora under ~1,000 documents where vector search top-k is already high precision.

- Hard real-time paths with a sub-50 ms total latency budget.

Tuning the candidate pool size is the highest-leverage knob. The sweet spot is 50-100 candidates; gains plateau by 200. A weighted fusion of first-stage and reranker scores often beats reranker scores alone, especially on lexically hard queries where BM25 carries useful signal the cross-encoder underweights.
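A simple form of weighted fusion is to min-max normalize each score list within the candidate set and blend with a single weight. The sketch below assumes that approach; `alpha=0.7` is an arbitrary starting point to tune on your eval set, not a recommended value.

```python
from typing import Sequence


def min_max(scores: Sequence[float]) -> list[float]:
    """Rescale scores to [0, 1] within one candidate set."""
    if not scores:
        return []
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.5] * len(scores)  # no signal: treat all candidates equally
    return [(s - lo) / (hi - lo) for s in scores]


def fuse(
    first_stage: Sequence[float], reranker: Sequence[float], alpha: float = 0.7
) -> list[float]:
    """Blend normalized scores: alpha * reranker + (1 - alpha) * first stage."""
    if len(first_stage) != len(reranker):
        raise ValueError("score lists must align one-to-one with candidates")
    norm_first = min_max(first_stage)
    norm_rerank = min_max(reranker)
    return [alpha * r + (1 - alpha) * f for f, r in zip(norm_first, norm_rerank)]
```

Normalizing per query matters because BM25 scores, cosine similarities, and cross-encoder logits live on unrelated scales.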

Implementing Reranking with OpenSearch

The pipeline shape is straightforward:

query

↓

OpenSearch hybrid search (BM25 ∪ kNN, normalized fusion)

↓ top 100

Reranker (Cohere API or self-hosted BGE)

↓ top 5

LLM prompt

OpenSearch supports a rerank search processor that intercepts the search response and rescores it. The cross-encoder approach uses the ml_opensearch rerank type with a model deployed via ML Commons. There are first-class tutorials for Cohere Rerank and for SageMaker-hosted cross-encoders.

For an external reranker, the wiring looks like:

- Store the API key in the OpenSearch keystore.

- Create an ML Commons connector pointing at the rerank API.

- Register a model that uses the connector and capture the model ID.

- Define a search pipeline with a rerank response processor referencing that model ID.

- Attach the pipeline to your search request via the search_pipeline parameter.

A typical pipeline definition (abridged):

PUT /_search/pipeline/rerank-pipeline
{
  "response_processors": [
    {
      "rerank": {
        "ml_opensearch": { "model_id": "<model-id>" },
        "context": { "document_fields": ["chunk_text"] }
      }
    }
  ]
}

The query then passes the query text under ext.rerank.query_context, as in "ext": { "rerank": { "query_context": { "query_text": "<query>" } } }, so the processor knows which text to compare against the document field.
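For reference, a search request that goes through the pipeline can be assembled as below. This is a sketch under assumptions: the index name `docs` is hypothetical, a plain `match` query stands in for the hybrid query, and the query text is supplied under `ext.rerank.query_context`, which is where the OpenSearch rerank processor documentation places it.

```python
import json


def build_rerank_search(
    query_text: str,
    candidates: int = 100,
    index: str = "docs",  # hypothetical index name
    pipeline: str = "rerank-pipeline",
    text_field: str = "chunk_text",
) -> tuple[str, dict]:
    """Return the request path and JSON body for a reranked search."""
    path = f"/{index}/_search?search_pipeline={pipeline}"
    body = {
        # Number of first-stage hits that reach the rerank processor.
        "size": candidates,
        # Stand-in first-stage query; swap in your hybrid query here.
        "query": {"match": {text_field: {"query": query_text}}},
        # Text the rerank processor compares against document_fields.
        "ext": {"rerank": {"query_context": {"query_text": query_text}}},
    }
    return path, body


if __name__ == "__main__":
    path, body = build_rerank_search("how are refunds issued?")
    print("POST", path)
    print(json.dumps(body, indent=2))
```

In this sketch the response still contains all reranked candidates; trimming to the final top 5 is left to the caller.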

For self-hosting a BGE reranker, the most flexible path is a thin FastAPI service in front of a PyTorch (or vLLM, for the 2B-class models) inference loop. Expose a /rerank endpoint that takes a query and a list of passages and returns scores. Wire it into OpenSearch via a custom connector pointing at the internal endpoint. A single A10 typically handles 50-100 QPS at top-100 rerank depth at 512-token passages.
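A skeleton for such a service might look like the following. Everything here is an illustrative assumption: `score_pairs` is a hypothetical callable wrapping whatever model you load (for example a cross-encoder's batch predict method), the request shape is made up for this sketch, and `batch_size=32` is a placeholder to tune against your GPU.

```python
from typing import Callable, Sequence

# Hypothetical model interface: takes (query, passage) pairs, returns scores.
PairScorer = Callable[[Sequence[tuple[str, str]]], Sequence[float]]


def score_passages(
    query: str, passages: Sequence[str], score_pairs: PairScorer, batch_size: int = 32
) -> list[float]:
    """Score passages in fixed-size batches, preserving input order."""
    scores: list[float] = []
    for start in range(0, len(passages), batch_size):
        batch = [(query, p) for p in passages[start : start + batch_size]]
        scores.extend(float(s) for s in score_pairs(batch))
    return scores


def create_app(score_pairs: PairScorer):
    """Build a FastAPI app exposing POST /rerank around the given scorer."""
    # Imported lazily so score_passages can be tested without FastAPI.
    from fastapi import FastAPI
    from pydantic import BaseModel

    class RerankRequest(BaseModel):
        query: str
        passages: list[str]

    app = FastAPI()

    @app.post("/rerank")
    def rerank(request: RerankRequest):
        scores = score_passages(request.query, request.passages, score_pairs)
        return {"scores": scores}

    return app
```

Keeping the batching logic separate from the web layer makes it easy to unit-test and to swap the serving framework later.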

Two operational habits separate well-run reranker deployments from the rest:

- Cache by hashing (query, doc_id) to a score with a short TTL. Head queries (the 20% of queries that account for 80% of traffic) get most of the benefit. Invalidate on document update.

- Log the query, candidate doc IDs, first-stage scores, reranker scores, and final ranks. Alert on average reranker score dropping (a corpus drift signal) and on p99 latency exceeding the budget. A "rank improvement of clicked doc" dashboard is the simplest health metric.
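The caching habit can be sketched as a small in-process class. The class name, the 300-second default TTL, and the query normalization (lowercasing and whitespace collapsing) are assumptions for illustration; a shared store such as Redis would follow the same key scheme.

```python
import hashlib
import time
from typing import Callable, Optional


class ScoreCache:
    """In-memory (query, doc_id) -> score cache with TTL and per-doc invalidation."""

    def __init__(self, ttl_seconds: float = 300.0, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict[str, tuple[float, float]] = {}  # key -> (expires_at, score)
        self._keys_by_doc: dict[str, set[str]] = {}  # doc_id -> cache keys

    @staticmethod
    def _key(query: str, doc_id: str) -> str:
        normalized = " ".join(query.lower().split())
        return hashlib.sha256(f"{normalized}\x00{doc_id}".encode("utf-8")).hexdigest()

    def get(self, query: str, doc_id: str) -> Optional[float]:
        key = self._key(query, doc_id)
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, score = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: drop and report a miss
            return None
        return score

    def put(self, query: str, doc_id: str, score: float) -> None:
        key = self._key(query, doc_id)
        self._entries[key] = (self._clock() + self._ttl, score)
        self._keys_by_doc.setdefault(doc_id, set()).add(key)

    def invalidate_doc(self, doc_id: str) -> None:
        """Drop every cached score for a document, e.g. after an update."""
        for key in self._keys_by_doc.pop(doc_id, set()):
            self._entries.pop(key, None)
```

Only cache misses need to go to the reranker, so a request for a head query can often skip the model call entirely.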

Advanced Patterns

A few patterns earn their complexity once the basic two-stage cascade is shipped:

- Two-stage reranking. A fast cross-encoder (MiniLM) cuts top-100 to top-20 in ~60 ms; a heavier model (BGE-gemma or an LLM judge) finalizes top-5 in another ~200 ms. Worth it when accuracy requirements are strict (legal, medical, compliance).

- Filters before rerank. Apply hard filters (date range, ACLs) before reranking to shrink the candidate set. A soft recency boost applied as a multiplier on the reranker score handles time-sensitive corpora cleanly.

- Query-dependent routing. Classify intent with a small classifier and route - code queries to Jina, multilingual to Cohere, easy English to MiniLM. Reduces cost without sacrificing accuracy on the queries that need it.

- Combine with query rewriting and HyDE. Each stage compounds. HyDE (Hypothetical Document Embeddings) drifts on hallucinated pseudo-answers, and reranking is the cheapest correction.
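As one example from the list above, the filter-then-boost pattern might be sketched like this. The `Candidate` fields, the 180-day half-life, and the 0.5 floor are hypothetical values chosen for illustration. The multiplier assumes reranker scores are non-negative (for example after a sigmoid); applied to raw negative logits it would reward older documents instead of penalizing them.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Candidate:
    doc_id: str
    age_days: float
    allowed: bool  # outcome of the ACL check for the current user
    rerank_score: float = 0.0  # assumed to be in [0, 1]


def hard_filter(candidates: Sequence[Candidate], max_age_days: float) -> list[Candidate]:
    """Drop candidates before reranking so no GPU time is spent on them."""
    return [c for c in candidates if c.allowed and c.age_days <= max_age_days]


def recency_multiplier(age_days: float, half_life_days: float = 180.0, floor: float = 0.5) -> float:
    """Soft boost that decays from 1.0 toward `floor` as a document ages."""
    decay = 0.5 ** (age_days / half_life_days)
    return floor + (1 - floor) * decay


def apply_recency(candidates: Sequence[Candidate]) -> list[Candidate]:
    """Re-sort reranked candidates by score times recency multiplier."""
    return sorted(
        candidates,
        key=lambda c: c.rerank_score * recency_multiplier(c.age_days),
        reverse=True,
    )
```

The floor keeps old but highly relevant documents in play, which a pure exponential decay would eventually zero out.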

Decision Checklist and Next Steps

A short five-question check decides whether a reranker is the next investment:

- Are users reporting irrelevant or hallucinated answers despite recall looking healthy?

- Is top-5 precision noticeably lower than top-50 recall?

- Do you have more than ~5,000 documents in your corpus?

- Can you spend 100-250 ms more per query?

- Do queries involve nuance (negations, conditions, jargon) rather than exact-match lookups?

Three or more "yes" answers, and a reranker will likely move the needle.

The cheapest first experiment is a hosted swap-in. Sign up for Cohere Rerank, drop it between retrieval and the LLM, and measure NDCG@10 plus answer correctness on a labeled set of 50 to 100 queries before and after. That is a weekend of engineering work, and the typical outcome is a measurable lift of 5-15 NDCG@10 points. If the lift is not there, the eval is wrong, the corpus is too small, or the failure mode lives upstream in chunking or query understanding.

From there, the work compounds: better chunking gives the reranker better candidates, query rewriting gives it better queries, and an LLM observability stack gives you the data to fine-tune on real traffic.

Key takeaways

- A cross-encoder reranker scores query-document pairs jointly, capturing nuances that bi-encoder similarity flattens. It is the cheapest, highest-leverage RAG fix when first-stage retrieval has good recall but poor top-k precision.

- Expect an NDCG@10 lift of 5-15 points in the common case, and 20+ on lexically hard datasets, for under 200 ms of added latency on a small-to-mid model.

- For most teams, Cohere Rerank 3.5 is the fastest path to lift; BGE v2-m3 is the strong self-hosted default; ColBERTv2 via RAGatouille is the late-interaction middle ground.

- Build the eval set first. Without labeled queries and NDCG@10, you cannot tell whether the reranker is helping or quietly regressing.

- OpenSearch supports reranking natively via the rerank search processor and ML Commons; wire your reranker into the search pipeline rather than into application code.

If you are running a RAG system on OpenSearch and the answers feel "almost right," reranking is usually where to look first.