GenAI Blog Posts

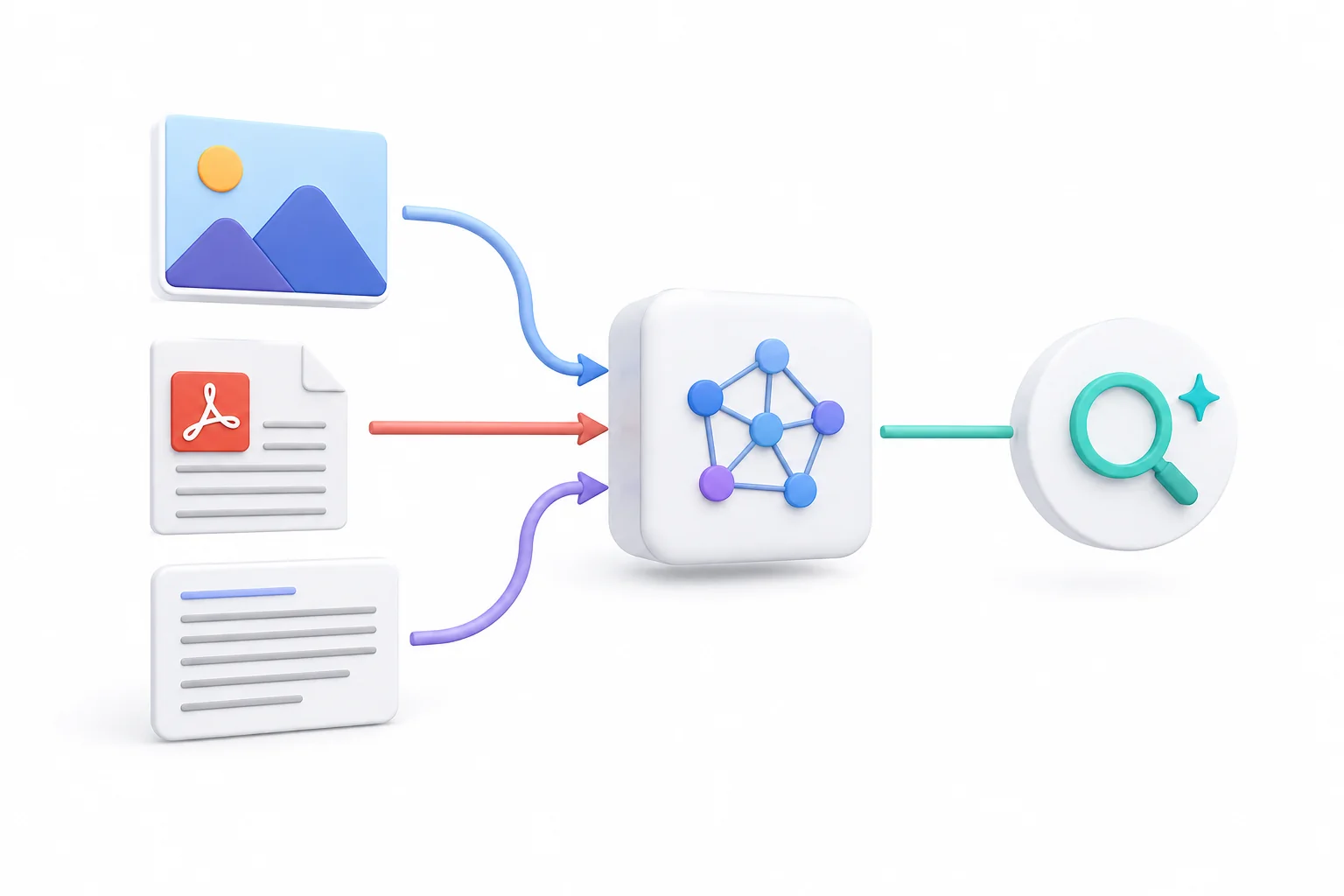

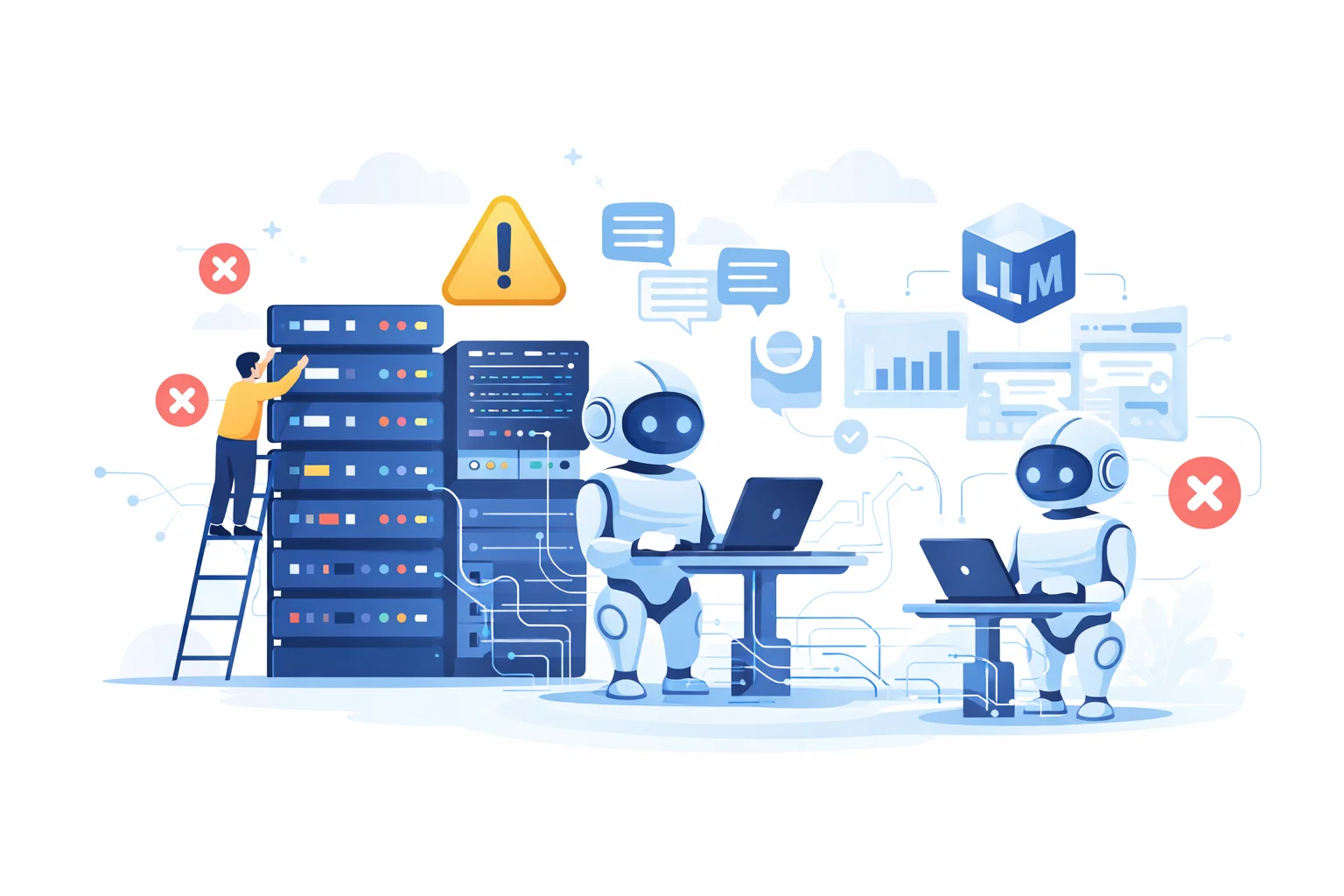

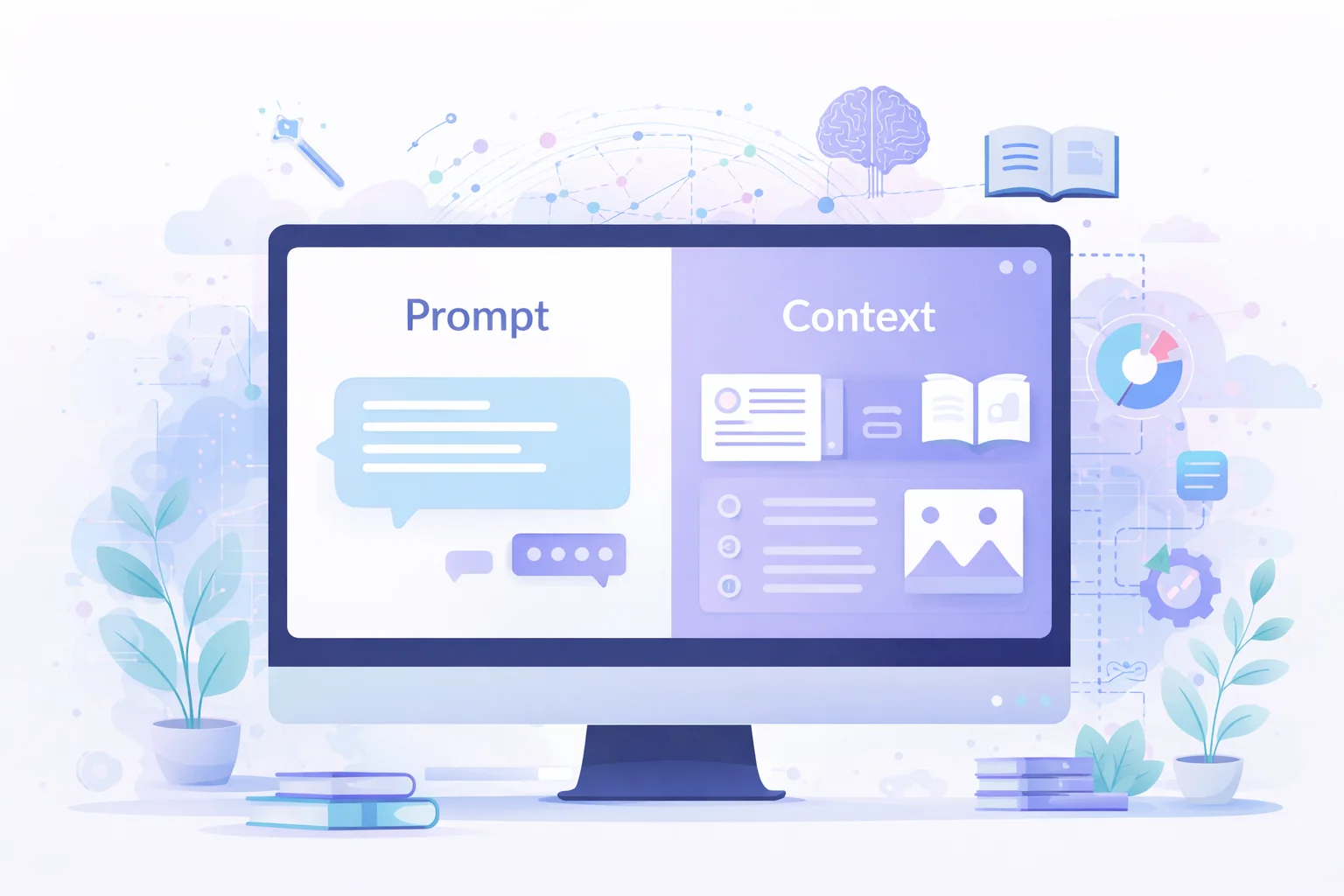

Generative AI moves fast, but the challenges of running it in production are engineering problems: latency, cost, evaluation, and knowing when not to use an LLM at all. From prompt engineering and fine-tuning to RAG pipelines, agentic workflows, and model evaluation, we cover what works in production, not just what works in demos. The teams that succeed with GenAI treat it as a systems engineering challenge rather than a single API call. Our articles focus on architecture decisions, retrieval quality, observability, and the tradeoffs between model and infrastructure choices. Our agentic workloads consulting and RAG fast track help teams build reliable AI applications on their existing data infrastructure.